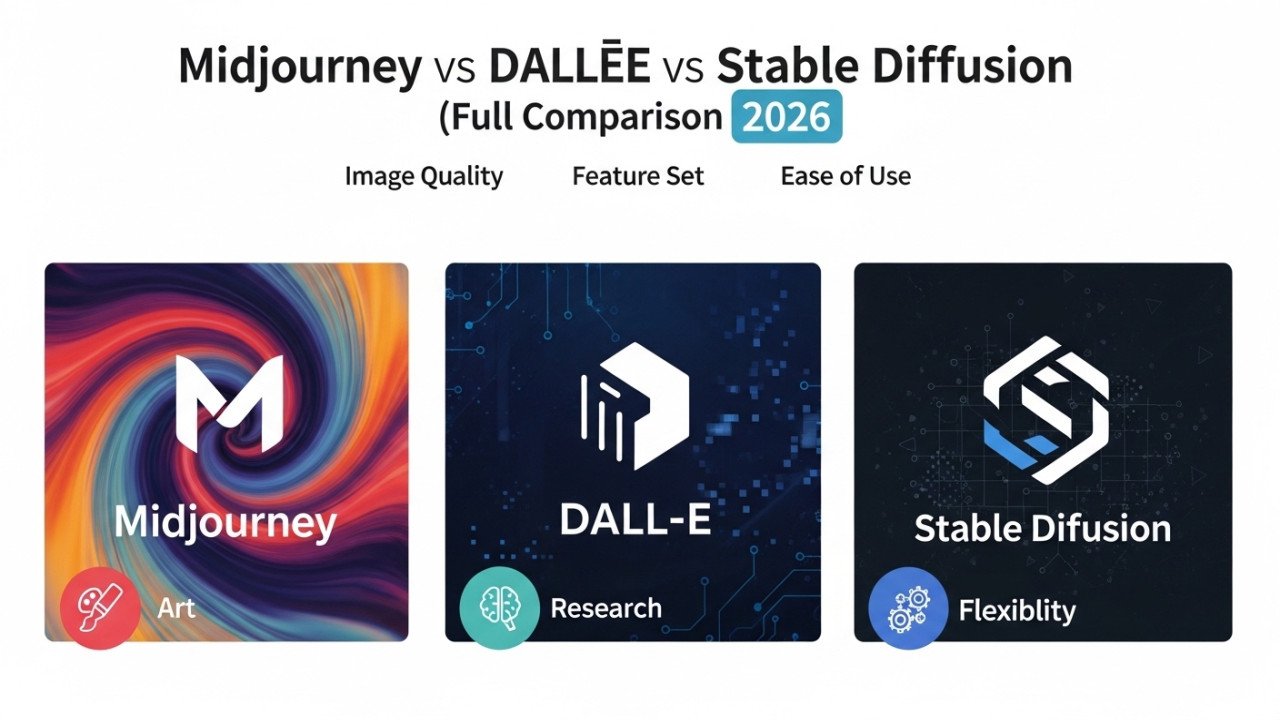

Midjourney vs DALL·E vs Stable Diffusion (Full Comparison 2026)

If you want the shortest answer, Midjourney is usually the best pick for artistic image quality, DALL·E is usually the best pick for ease of use and prompt accuracy, and Stable Diffusion is usually the best pick for customization, local control, and high-volume workflows. The right choice depends less on hype and more on whether you care most about visual polish, speed of iteration, prompt fidelity, or production control.

At a glance

Across current 2026 comparisons, Midjourney is consistently described as the strongest tool for visually striking outputs, DALL·E as the easiest and most accessible option, and Stable Diffusion as the most customizable platform with the widest workflow flexibility. Speed is less straightforward: DALL·E often returns results in roughly 10 to 30 seconds, Midjourney often lands around 15 to 60 seconds depending on mode and upscaling, and Stable Diffusion can be extremely fast on a strong local GPU but much slower on weaker hardware or cloud setups.

| Tool | Image quality | Speed | Accuracy | Best fit |
|---|---|---|---|---|
| Midjourney | Usually the most polished and aesthetically appealing, especially for concept art, moodboards, and marketing visuals. | Typically slower than DALL·E in cloud use, especially when upscaling is included. | Good, but less precise than DALL·E for strict prompt following, exact counts, or text-heavy scenes. | Designers, marketers, creators, and teams that want “beautiful by default” results. |
| DALL·E | Clean, reliable, and strong for photorealistic or client-friendly outputs, though often less visually dramatic than Midjourney. | Commonly benchmarked around 10 to 30 seconds, though Zapier still describes the ChatGPT experience as slow because it generates one image at a time. | Best of the three for prompt adherence, readable text, and conversational edits. | Beginners, marketers, product teams, and anyone who wants fast prompting without a learning curve. |
| Stable Diffusion | Highest ceiling when tuned well, but default quality varies far more than Midjourney or DALL·E. | Fastest when run on capable local hardware, but overall speed depends heavily on GPU, settings, and implementation. | Accuracy is model-dependent and improves with tuning, but out of the box it is usually less predictable than DALL·E. | Developers, power users, agencies, and teams that need ControlNet, LoRA training, automation, or private local generation. |

Strengths and weaknesses

Midjourney’s main strength is aesthetic quality: multiple 2026 comparisons describe it as the best choice for artistic, polished, and scroll-stopping visuals, especially when you want strong textures, lighting, and color without much prompt engineering. Its main weaknesses are weaker text rendering, less precise control over details like spatial positioning, and a more limited customization model than Stable Diffusion.

DALL·E’s biggest strengths are accessibility, prompt fidelity, and text handling, with current reviews highlighting its conversational workflow, reliable editing, and strong performance on labels, signs, and mockups. Its main weakness is that the outputs can feel more generic or less “art directed” than Midjourney, and the ChatGPT workflow is less flexible than an open production pipeline.

Stable Diffusion’s biggest advantage is control: 2026 sources consistently highlight LoRA training, ControlNet, inpainting, outpainting, ComfyUI workflows, and local deployment as features that neither Midjourney nor DALL·E can match at the same level. Its weakness is that all that power comes with a steeper learning curve, more setup, and more variation in results unless you know which model, sampler, and workflow to use.

Side-by-side use cases

For marketing and social media, Midjourney is usually the strongest choice because it produces the most visually striking ad-style imagery with minimal effort, while DALL·E is often the better option when the graphic includes text, labels, or tighter prompt requirements. That is why many teams use Midjourney for the hero visual and DALL·E for fast mockups, slides, or client-facing comps.

For product photography and ecommerce, Stable Diffusion becomes much more attractive because custom LoRA training and automated workflows can generate repeatable product variants at scale, while Flux or DALL·E-style tools are often better for quick concepting without setup. For game art, concept art, and creative exploration, a hybrid workflow is common: Midjourney for early visual exploration, then Stable Diffusion for controlled iteration and consistency.

For UI mockups, posters, packaging, and any image that needs readable text, DALL·E has the clearest edge among these three because several sources point to stronger prompt adherence and more reliable text rendering. For privacy-sensitive work such as confidential client assets or internal product concepts, Stable Diffusion is the safest fit because it can run locally without sending assets to a cloud service.

Which one is best

Choose Midjourney if your priority is image quality first, especially for creative campaigns, editorial-style visuals, concept art, brand moodboards, and images that need immediate visual impact. Choose DALL·E if you want the easiest workflow, better prompt accuracy, stronger text inside images, and less friction for non-technical users.

Choose Stable Diffusion if you want professional control, local deployment, batch generation, custom model training, or a workflow that scales beyond simple prompting. If you only want one-line guidance: Midjourney is best for creators, DALL·E is best for general users, and Stable Diffusion is best for advanced production work.
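For teams that want to write this guidance down as a repeatable rule, the recommendations above can be sketched as a small lookup. This is only an illustration: the priority labels, the `RECOMMENDATIONS` table, and the `recommend_tool` function are hypothetical names invented here, and the simple vote count with a first-listed tie-break is an assumption, not a method taken from any of the three tools.

```python
# Hypothetical priority labels mapped to the tool this article recommends for each.
# These names are illustrative only; they are not part of any tool's API.
RECOMMENDATIONS = {
    "visual_polish": "Midjourney",
    "ease_of_use": "DALL·E",
    "prompt_accuracy": "DALL·E",
    "text_in_image": "DALL·E",
    "local_control": "Stable Diffusion",
    "custom_training": "Stable Diffusion",
    "batch_generation": "Stable Diffusion",
}


def recommend_tool(priorities):
    """Return the tool that matches the most priorities.

    Ties go to the tool matched by the earliest-listed priority, so callers
    should list their most important priority first.
    """
    votes = {}
    for priority in priorities:
        if priority not in RECOMMENDATIONS:
            raise ValueError(f"unknown priority: {priority!r}")
        tool = RECOMMENDATIONS[priority]
        votes[tool] = votes.get(tool, 0) + 1
    if not votes:
        raise ValueError("at least one priority is required")
    # dicts keep insertion order and max() returns the first maximal key,
    # so a tie resolves in favor of the earlier priority.
    return max(votes, key=votes.get)


if __name__ == "__main__":
    print(recommend_tool(["visual_polish"]))
    print(recommend_tool(["text_in_image", "ease_of_use", "visual_polish"]))
    print(recommend_tool(["local_control", "batch_generation"]))
```

A lookup like this deliberately ignores budget, hardware, and hybrid workflows such as exploring in Midjourney and refining in Stable Diffusion, so treat it as a starting point for discussion rather than a final answer.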
